The Alpha Math Problem

Applying the bare minimum of education research to Alpha's claims of 2x learning

There’s a school in Texas that has received breathless coverage from Michael Horn, The Week, the NYTimes, and Wired for its groundbreaking model, where students learn from software instead of teachers.

Some claim it should be the future of learning - that no other system could possibly produce results like this.

Others ask: why is Alpha so successful even when other edtech is not?

I was curious about this - how are the results at Alpha so much better than most large education studies? So I dove in.

They are opening locations all around the country, including in my hometown of Chicago. So my kids attended a shadow day in a hotel in River North to get a firsthand feel for what the experience would be like.

I also visited the Alpha High School campus in Austin. Several current students and guides answered many of my questions. The students were impressive and shared all the creative ways they are learning and growing with the program.

I want to understand: what is it about Alpha School that seems to work - and what doesn’t? And what can we learn from the program to apply to other programs?

Research written by a bot

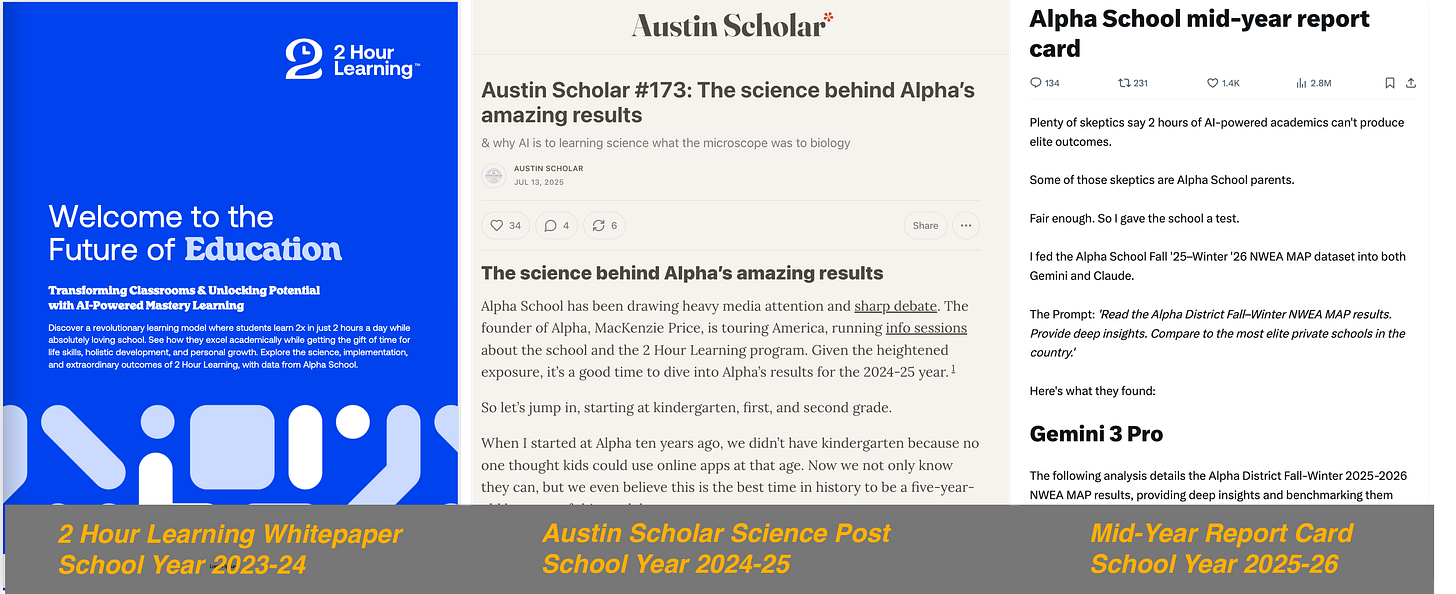

I could find only three solid sources of data about the performance of Alpha school students: the official white paper, a blog post from last summer, and Joe Liemandt’s recent mid-year report card tweet.

The two most recent reports were written by LLMs. In both cases, someone from Alpha School entered their raw school data into either Claude or Gemini, and then copy/pasted the results into a post. These are the three sources:

The Official Alpha 2 Hour Learning Paper (2024)

The official Alpha White Paper covers data up through 2024. It includes attainment in both elementary and high school at Alpha Austin, growth data from NWEA MAP, and a few cherry-picked examples from the Brownsville campus.

The Austin Scholar Post (2025)

The 2024-25 data was published in a post on the Austin Scholar blog last summer. Most of the post is LLM rehash - Austin said, “I uploaded the K-2 class results from NWEA’s MAP test to Claude Sonnet 4 Deep Research.” He provided the original NWEA MAP School Summary PDFs so we can see the raw school results.

The Alpha Mid-Year Report Card (2026)

More recently, the founder Joe Liemandt tweeted out an LLM-generated 2,500-word analysis as their 2025-26 mid-year report card. The post includes rehash from Gemini and Claude.

This is not an actual research paper or even one that was written by a human. We all have seen how chatbots aim to please and will make overconfident claims that gloss over problems.

Since we’re just using AI for analysis, I plugged the Mid-Year Report card into Gemini and asked it to highlight questions to ask. I asked Gemini, “what are the questions you would ask the researchers to really understand the mid-year report on Alpha School?”

Here was its response:

This report is statistically staggering, but in the world of educational data, “too good to be true” usually means there is a hidden variable in how the data was collected or filtered.

As a researcher, I wouldn’t just look at the scores; I would look at the denominator. Here are the two critical questions I would ask the Alpha School leadership to determine if these results are a breakthrough in AI instruction or a masterpiece of data selection.

What is the “Matched Student” count? How many students were excluded from the growth data because they left the school or missed a test window between Fall and Winter?

What is the baseline cognitive ability (IQ) of the cohort? Are these results the product of the AI’s instruction, or is this simply a group of students who were already in the 99th percentile of innate ability upon admission?

Both of these questions focus on aspects of selection bias.

Selection bias is the most powerful force in education

Ten years ago, I was CTO at an education company. We built personalized learning software that provided students an adaptive path. I spent many hours poring over standardized test results from school districts across the country. And I recall one powerful article - why selection bias is the most powerful force in education. Whenever you try to understand a particular education statistic, you have to understand: how was the underlying sample constructed? Who was chosen to participate? And how might that skew the results?

Despite the national profile, Alpha is a very small program. Nationally, they serve only a few hundred students. Only 154 PK-8 students made up the sample for the 2024-25 results discussed above. In such a small sample, averages can easily skew based on relatively small factors.
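To make the small-sample point concrete, here is a minimal sketch with made-up scores (not Alpha data). It assumes the 154 students are spread roughly evenly across the ten PK-8 grade levels, so about 15 per grade, and compares how much one low-scoring student moves the average in a group of 15 versus a group of 1,500. The function name and all numbers are hypothetical.

```python
def mean_shift_from_removal(scores, index):
    """Change in the group mean when the student at `index` is dropped."""
    before = sum(scores) / len(scores)
    remaining = scores[:index] + scores[index + 1:]
    after = sum(remaining) / len(remaining)
    return after - before

# Hypothetical RIT scores: everyone at 220 except one student at 190.
small_class = [220] * 14 + [190]      # about one Alpha-sized grade (assumption)
large_cohort = [220] * 1499 + [190]   # a district-sized grade, for comparison

shift_small = mean_shift_from_removal(small_class, len(small_class) - 1)
shift_large = mean_shift_from_removal(large_cohort, len(large_cohort) - 1)

print(f"Shift in a class of 15:    {shift_small:.2f} RIT points")
print(f"Shift in a cohort of 1500: {shift_large:.2f} RIT points")
```

In this toy setup, a single student moves the small group's average about a hundred times more than the large group's, which is why who is and isn't in the sample matters so much at this scale.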

So who is in the sample? We know a few factors that may bias the selection:

Parental Income. Tuition at the flagship location is $40,000 a year, and at some others it is even higher - Alpha Chicago will charge $55,000! Parental income is highly correlated with standardized test performance, so high tuition would be expected to lead to higher test results. As Dan Meyer said, “They have replaced poor kids with rich kids.”

Shadow Day Selection. Alpha students are not technically selected on the basis of a placement test (that’s what the Alpha GT is for). But the school is still choosy. Students are required to come for a shadow day, where they spend a few hours on educational apps and their academic level is assessed. Guides also look for kids who are good communicators, respond well to feedback, and fit in well with the community.

Expulsion. Like all private schools, Alpha can choose to counsel students out. I asked at an info session: why would you counsel a student out of the school (i.e., expel them)? The presenter said that if a student is struggling, it’s never the student’s fault. Still, if parents aren’t supportive of the school’s methodology, then the family may be asked to leave.

And if they leave before the NWEA spring testing window, then their results won’t count in the growth stats.
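Here is a rough sketch of why attrition matters for growth stats. The records are invented, and the helper names (`matched_growth`, `growth_with_leavers`) are mine, not anything from NWEA or Alpha. The first function averages growth only over students with both a fall and a spring score, which is what a matched-student report does. The second is a simple sensitivity check: what would the average be if the students who left had grown by some assumed amount?

```python
from statistics import mean

def matched_growth(records):
    """Return (matched_count, mean_growth) using only students with both scores."""
    growths = [r["spring"] - r["fall"] for r in records if r["spring"] is not None]
    return len(growths), mean(growths)

def growth_with_leavers(records, assumed_leaver_growth):
    """Mean growth if every student without a spring score had grown by the assumed amount."""
    growths = [
        (r["spring"] - r["fall"]) if r["spring"] is not None else assumed_leaver_growth
        for r in records
    ]
    return mean(growths)

# Hypothetical RIT scores; spring=None means the student left before spring testing.
records = [
    {"fall": 200, "spring": 215},
    {"fall": 205, "spring": 217},
    {"fall": 210, "spring": 223},
    {"fall": 190, "spring": None},
    {"fall": 195, "spring": None},
]

n_matched, reported = matched_growth(records)
adjusted = growth_with_leavers(records, assumed_leaver_growth=5)  # 5 is an arbitrary guess

print(f"Matched students: {n_matched} of {len(records)}")
print(f"Reported mean growth: {reported:.1f}")
print(f"Mean growth if leavers had grown 5 points: {adjusted:.1f}")
```

The assumed growth for leavers is unknowable from a published summary, which is exactly the problem: without the matched count and the attrition numbers, we can't tell how far the reported average could move.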

Only Alpha Austin. Alpha has numerous locations around the country, some of which have been open for more than a year. Yet, the only public datasets I’ve seen have come from the flagship Austin location.

It goes without saying that, unlike public schools, no private school HAS to offer support to students who require special education, have behavior challenges, or face any number of other potential obstacles to their education. Alpha can choose to serve a small number of high achieving, rich students with strong parental support who are already committed to their model.

It is all fine to create another model to serve those with lots of support. But it doesn’t tell us much about whether this extends to those who aren’t already successful.

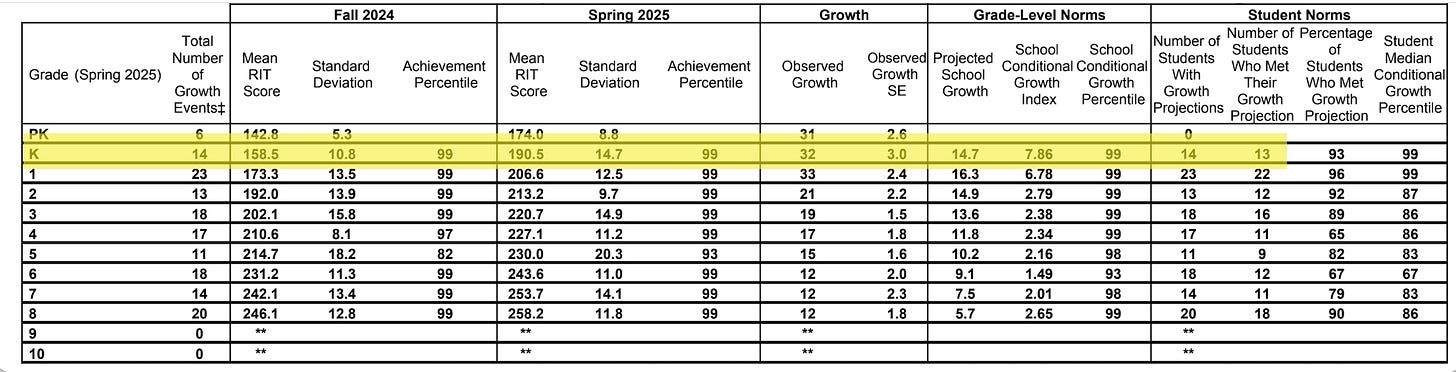

Alpha students come IN testing at the 99th percentile

One thing we know: on average, most of the incoming class in Fall 2024 was already testing at the 99th percentile. The average RIT score is a full grade level ahead.

For the older kids, maybe they test so well because they’ve already been at Alpha School for years. But we see this effect in the four lowest grades: K-3 are all coming in at the 99th percentile.

If Kindergarten students come in to the school already demonstrating that they are excellent test-takers, then it’s not as surprising that they continue to test well in the spring.

How do we measure learning?

One of the best parts of Alpha is the belief that students can do things well beyond what’s typically expected of their age. Students build companies. They write musicals. They create all sorts of incredible projects in the community.

Despite all this grandiose rethinking, Alpha primarily uses a very traditional metric for success: standardized tests. For K-8, this means NWEA MAP; for high school, it means SAT and AP tests. They also use other metrics, such as surveys about whether students like school, but their primary optimization is for test scores.

Students are all hyper-aware of their test scores and work to optimize them. At the walkthrough, each student touted their own SAT scores - not only juniors and seniors, but freshmen as well. The Timeback system for little kids incorporates their NWEA scores front and center on the main dashboard.

Yet, despite the core focus on test scores, their actual analysis is weak and mostly powered by LLMs. The white paper that they have released has major errors. These reports are best interpreted as marketing, and certainly should not be compared to peer-reviewed, third-party research.

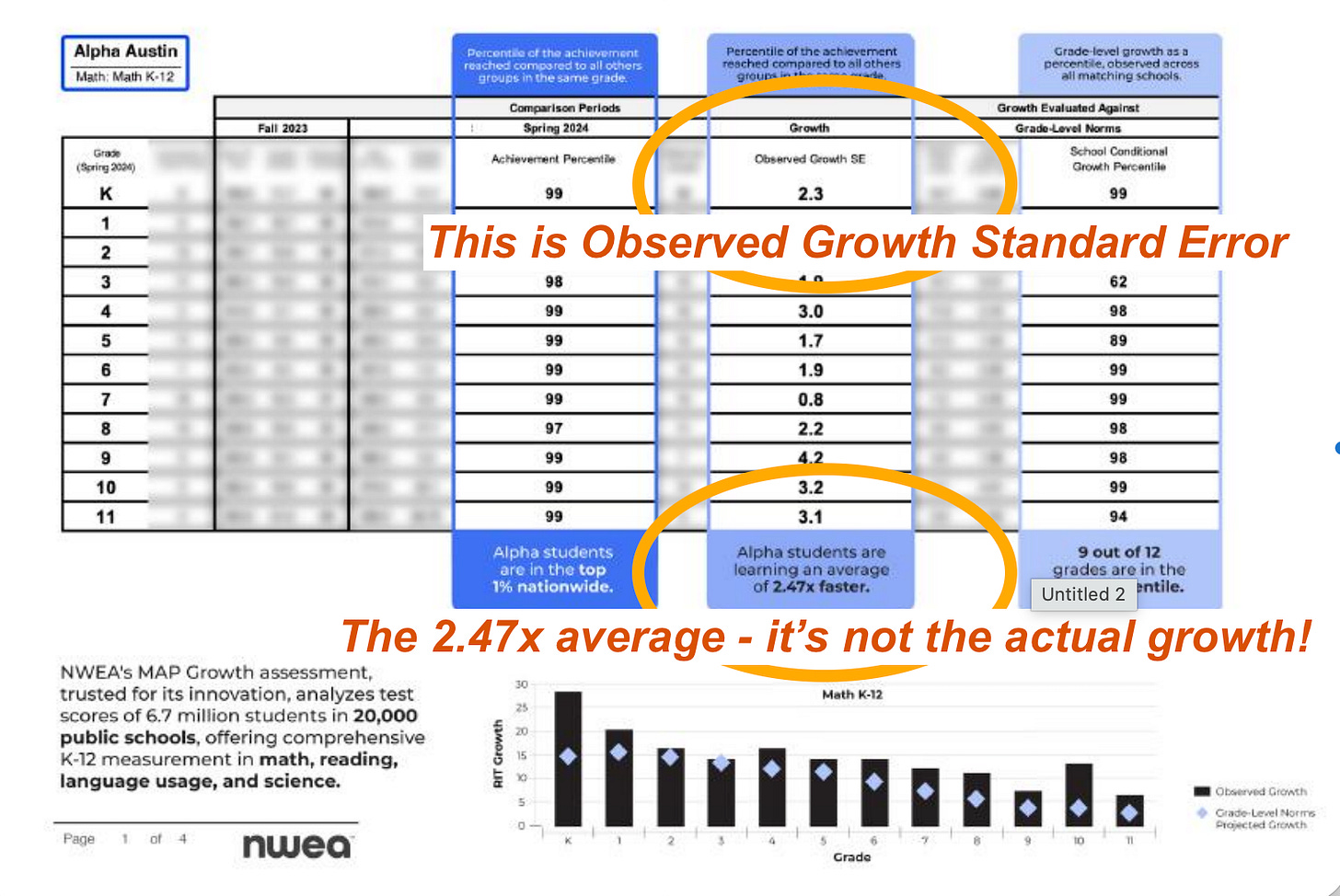

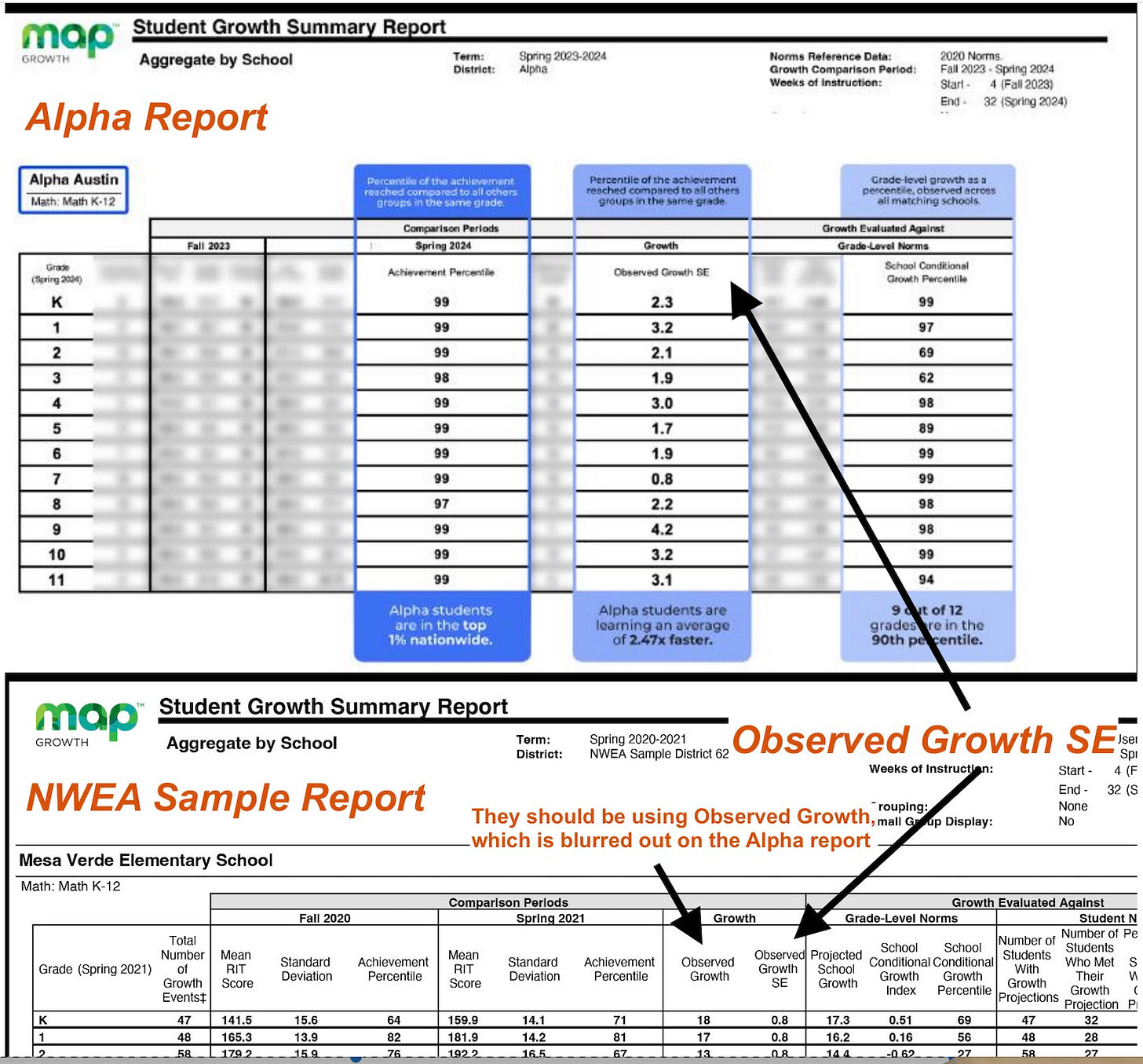

Using the wrong column for growth

When I first heard of Alpha School, I wanted to know: how did they get such great growth numbers? I read the white paper. And as I read the blurry reports that form the centerpiece of their claims, it didn’t quite make sense. I’ve looked at many School Summary reports in my career, and this one was missing a critical column - the growth itself.

Here is an NWEA summary page from the original white paper. Alpha’s annotations claim that this shows 2.5x growth. They claim that when averaged, it shows that “Alpha students are learning an average of 2.47x faster”.

I noticed that the data points on the bar graph on the bottom didn’t match the data points on the highlighted column. This is because it’s the wrong column!

The chart above shows the average of the growth standard error! It’s not even the real growth number! Check out the official NWEA MAP definitions page to see that there are two columns:

Observed Growth - Average change in RIT scores from starting term to ending term. This is the column they should be using.

Observed Growth SE - Growth standard error (SE) associated with term-to-term growth for the group.

Here I place the Alpha report next to the NWEA sample so you can see - they have blurred the most useful column, and instead chosen to average a meaningless statistical measure of error rather than the correct data.
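To show the difference between the two columns, here is a small sketch with invented numbers (these are not Alpha's figures). I'm assuming a growth multiple is computed as observed growth divided by a norm-based expected growth; the `projected_growth` field and the `growth_multiple` helper are my own labels for illustration, not something the white paper defines.

```python
from statistics import mean

# Hypothetical rows from a growth summary report (made-up numbers, not Alpha's data).
# "projected_growth" is an assumed field holding the norm-based expected growth.
rows = [
    {"grade": 3, "observed_growth": 15.0, "observed_growth_se": 2.4, "projected_growth": 10.0},
    {"grade": 4, "observed_growth": 12.0, "observed_growth_se": 2.6, "projected_growth": 8.0},
]

def growth_multiple(row):
    """Observed growth relative to projected growth for one grade (assumed definition)."""
    return row["observed_growth"] / row["projected_growth"]

# Averaging the growth multiples uses the Observed Growth column.
correct_multiple = mean([growth_multiple(r) for r in rows])

# Averaging the standard errors produces a number that measures uncertainty, not growth.
mistaken_average = mean([r["observed_growth_se"] for r in rows])

print(f"Average growth multiple (Observed Growth): {correct_multiple:.2f}x")
print(f"Average of Observed Growth SE:             {mistaken_average:.2f}")
```

The second number can land anywhere, because a standard error depends mostly on group size and score spread. Reading it as a growth multiple says nothing about how fast students are learning.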

An error like this is huge. It should have been caught in early review. Yet, it wasn’t - and in fact that error serves as the basis for the 2x learning claim.

In truth, when I looked at the data for the 2023-24 school year, the actual learning gain was closer to 1.3x in math and 1.6x in reading. For the 2024-25 results, the learning gain is a little higher - on average closer to 1.7x.

Does this matter? I mean, these are fantastic results! Kids at Alpha certainly do great on standardized tests. If you want your kid to do well on standardized tests, then Alpha might be a good place to go.

Alpha School should enlist an external researcher

The future of education is a huge open question. LLMs are exponentially improving, getting closer to AGI every day. How will students learn today and in the future? What works best? And what doesn’t work?

Alpha has popularized many ideas about the future of education. In many ways they have made mainstream the vision of a personalized learning software that knows each student and guides them through the school day. I love that they believe in their students and help them accomplish goals that seem out of reach for their age. I love the supportive community. And they are pushing the boundaries and driving innovation on the use of software to track progress and implement learning.

Most private schools don’t publish data to the degree that Alpha does, but most private schools also don’t claim to be the future of education. When a school makes outsize claims of once-in-a-generation learning, it should back them up with rigorous research. It shouldn’t leave obvious statistical errors in its prominent results paper.

If Alpha sees its model as a blueprint for the future of learning, then they must move beyond LLM-generated report cards and cherry-picked datasets. They should open themselves up to proper peer review from respected researchers, and publish complete datasets that let the public evaluate their efficacy. Here are the three must-dos that would help me better trust the efficacy claims from Alpha:

Third Party Publication. When companies publish their own reports, they tend to report a much more positive effect than neutral third parties do. I would love to see a report from a respected university professor or researcher who looks at the data and does an independent analysis, following education research best practices including sharing methodology and samples.

Diverse Populations. I am so curious - what does the Alpha School data look like for Miami? How about the Texas Sports Academy or their Brownsville location? Does 2 Hour Learning show repeated results across different contexts? How does it work in the Unbound Charter school in Arizona?

Incoming Student Profile & Attrition. What are the test scores for the incoming students? What are the results including those who left the school throughout the year?

We don’t need a randomized controlled trial. Simple transparency and external validation would go a long way. If Alpha continues to show strong results across multiple locations and contexts, then they should follow the best practices in education research to build trust that the model can generalize beyond just the elite private school market.

Wow - the egregious error should have been caught by those reporting out on their results too. Thanks for digging into this. Selection bias was my first thought so wild that not only are they 99% coming in but the growth isn’t even real. The amazing thing is edtech like differentiated learning apps has been proved to work if the measure is as simple as NWEA test scores. I think the real question is whether that’s the right rubric for growth anymore!

While I agree they should hire an external researcher (though only if they could be impartial)… I think they lost my business when they said it was 55k per child. We already pay 30k in Evanston whether we want to or not. So that would be 85k for an education I could deliver myself for around 100/month in token spend.